AI Talking Robot That Speaks Bangla and English

Build a human-like AI talking robot with Arduino & Python that speaks Bangla and English with voice control and smart gestures.

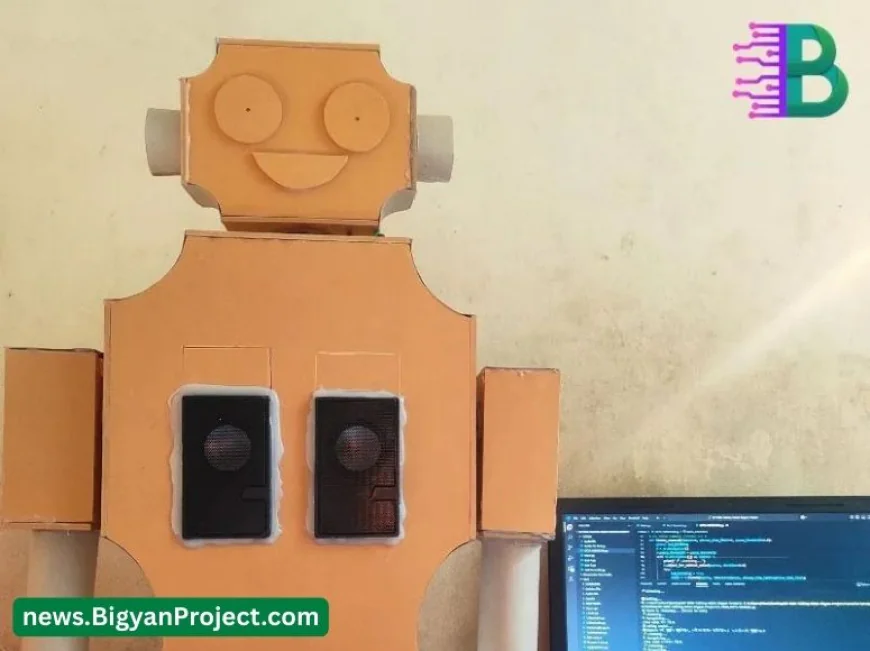

AI Talking Human Robot That Speaks Bangla and English

Bring natural, bilingual voice interaction to your classroom, lab, or demo booth with this smart human-like robot. Built on an Arduino Uno R3 SMD controller and a Python/C++ software stack, the robot listens through a 3.5 mm microphone and replies through twin mini speakers in clear Bangla and English. Head and hand gestures are driven by metal-gear servos for expressive movement, while LED eyes provide instant status feedback. This project is designed for learning, demonstration, and innovation, and it’s supported by detailed documentation, community tips, and parts available from Bigyan Project (বিজ্ঞান প্রজেক্ট).

Explore the full build log, updates, and media on the official project page: Talking Human Robot with AI & Voice Control. Components and upgrade parts are available at the Bigyan Project store.

Product Specifications

| Specification | Details |
|---|---|
| Body Material | 5 mm Plastic PVC Board |
| Hand Movement | 2 × MG995 180° Metal Gear Servo Motors |
| Head Movement | 1 × MG90S Metal Gear Servo Motor |
| Eye Indicator | 2 × 3 mm LED status lights |
| Main Controller | Arduino Uno R3 SMD Development Board |
| Audio Input | 3.5 mm Microphone |
| Audio Output | 2 × Mini Sound Boxes (speakers) |
| Programming Languages | Python and C++ |
| Language Support | Bangla and English voice interaction |
| Response System | Predefined answers + AI-based replies |
| Recommended Power | Separate regulated 5–6 V supply for servos; 5 V for logic (use common ground) |
| Dimensions | Depends on enclosure design; PVC panels cut to project requirements |
| Connectivity | USB serial between Python host and Arduino |
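
The recommended servo supply above can be sized with a quick calculation. The sketch below is only an illustration: the per-servo peak currents and the 25% headroom are placeholder assumptions, not datasheet figures, and the names `ServoLoad` and `required_supply_current` are hypothetical helpers introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServoLoad:
    name: str
    count: int
    peak_current_a: float  # assumed peak current per servo, in amps


def required_supply_current(loads, headroom=1.25):
    """Return the supply current (A) needed if every servo peaks at once."""
    total = sum(load.count * load.peak_current_a for load in loads)
    return total * headroom


# Placeholder currents for illustration only; replace with datasheet values.
loads = [
    ServoLoad("MG995 (hands)", count=2, peak_current_a=1.5),
    ServoLoad("MG90S (head)", count=1, peak_current_a=0.7),
]
budget = required_supply_current(loads)
print(f"Size the servo supply for at least {budget:.2f} A")
```

Summing the worst case for all servos is deliberately conservative; a supply sized this way should not sag when both hands and the head move together.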

Features

- Natural two-way voice interaction in Bangla and English

- Human-like gestures via metal-gear servos for hands and head

- LED eye indicators that reflect listening, speaking, and idle states

- Modular codebase in Python and C++ for easy customization

- Mixed reply system with predefined responses plus AI-generated answers

- Durable 5 mm PVC body suitable for classroom handling and demos

- Clear upgrade path with easily available parts from Bigyan Project

- Works as a learning kit for speech, robotics, and embedded systems
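
The mixed reply system listed above could be organized roughly as follows. This is a minimal sketch, not the project's actual code: the `PREDEFINED` entries, the `normalize` and `reply` helpers, and the `fake_ai` stand-in are all assumptions made for illustration.

```python
from typing import Callable, Dict

# Hypothetical predefined answers, keyed by normalized question text.
PREDEFINED: Dict[str, str] = {
    "what is your name": "I am the Bigyan Project talking robot.",
    "তোমার নাম কি": "আমি বিজ্ঞান প্রজেক্টের কথা বলা রোবট।",
}


def normalize(text: str) -> str:
    """Lowercase, trim, and drop trailing punctuation so lookups are forgiving."""
    return text.strip().lower().rstrip("?!.।")


def reply(text: str, ai_fallback: Callable[[str], str]) -> str:
    """Return a predefined answer when one matches, else ask the AI backend."""
    key = normalize(text)
    if key in PREDEFINED:
        return PREDEFINED[key]
    return ai_fallback(text)


def fake_ai(text: str) -> str:
    """Stand-in for a real model call (assumption, for demonstration only)."""
    return f"(AI) I heard: {text}"


print(reply("What is your name?", fake_ai))
print(reply("Tell me a joke", fake_ai))
```

Checking the predefined table first keeps common questions fast and predictable, while anything unmatched falls through to the AI backend.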

Applications / Use Cases

- Science fairs and student showcases with bilingual interaction

- STEM classroom demonstrations of speech, NLP, and mechatronics

- Museum or event information kiosks requiring Bangla and English

- Retail greeter or visitor assistant for local language engagement

- Research prototype for human-robot interaction and dialogue design

- Community workshops and maker-space learning programs

User Guide / How to Use

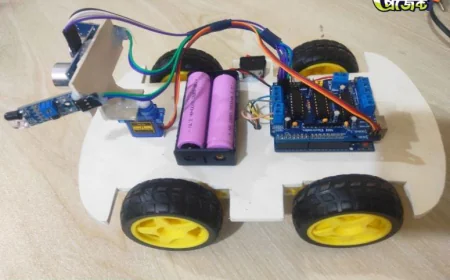

- Assemble the frame by cutting 5 mm PVC panels to size and mounting the MG995 hand servos and the MG90S head servo with suitable brackets.

- Wire the electronics. Power servos from a dedicated 5–6 V regulated supply, power Arduino from USB or a regulated 5 V source, and connect all grounds together. Connect LED eyes to Arduino digital pins with resistors.

- Connect the 3.5 mm microphone to the audio input of the host computer that runs the Python speech processing. Connect the mini sound boxes to the host's audio output, through an amplifier if needed.

- Upload the Arduino sketch that listens to serial commands and drives the servos and LEDs. Define pins, servo limits, and simple motion presets for gestures.

- Install the Python environment on the host computer. Set up speech-to-text, text-to-speech, and the dialogue logic that maps recognized intents to responses and motion commands.

- Link Python to Arduino via serial. Test round-trip by sending a sample gesture command and blinking the LED eyes on cue.

- Calibrate servo ranges to avoid mechanical binding. Adjust microphone gain to reduce noise and improve recognition.

- Run the main application. Speak a Bangla or English phrase. Confirm the robot listens, processes the intent, replies via speakers, and performs a matching gesture.
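
The serial link and calibration steps above can be sketched on the Python side as shown below. The text-based `SERVO` and `EYES` command format, the servo names, and the angle limits are hypothetical; the real Arduino sketch may use a different protocol, and the limits should come from your own calibration.

```python
import io

# Hypothetical calibrated limits in degrees; find real values during calibration.
SERVO_LIMITS = {
    "left_hand": (10, 170),
    "right_hand": (10, 170),
    "head": (30, 150),
}
EYE_STATES = {"idle", "listening", "speaking"}


def servo_command(name: str, angle: int) -> bytes:
    """Build one newline-terminated servo command, clamped to safe limits."""
    low, high = SERVO_LIMITS[name]
    safe_angle = max(low, min(high, angle))
    return f"SERVO {name} {safe_angle}\n".encode("ascii")


def eyes_command(state: str) -> bytes:
    """Build one newline-terminated LED eye command for a known state."""
    if state not in EYE_STATES:
        raise ValueError(f"unknown eye state: {state}")
    return f"EYES {state}\n".encode("ascii")


def send_gesture(port, commands) -> None:
    """Write commands to any object with write(), such as a pyserial Serial."""
    for command in commands:
        port.write(command)


# Demo with an in-memory buffer standing in for the serial port.
port = io.BytesIO()
send_gesture(port, [eyes_command("speaking"), servo_command("head", 200)])
sent = port.getvalue()
print(sent)
```

Clamping on the host means an out-of-range request (200° here) is reduced to the calibrated maximum before it reaches the servo. With pyserial installed, a `serial.Serial` object opened on the Arduino's port can be passed as `port` in place of the buffer.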

Frequently Asked Questions (FAQs)

- Q: Does the robot speak both Bangla and English out of the box?

A: Yes, the project is designed for bilingual interaction. Provide the required speech models and TTS voices in the Python environment to enable both languages.

- Q: Can it work offline for privacy?

A: Offline operation depends on the chosen speech-to-text and text-to-speech engines. Select offline-capable models to keep audio processing on the device.

- Q: What power supply should I use for the servos?

A: Use a regulated 5–6 V supply with adequate current for two MG995 servos and one MG90S, and keep servo power isolated from logic power while sharing ground.

- Q: How do I prevent servo jitter when the robot is speaking?

A: Use separate power rails, add decoupling capacitors, and route audio and servo wiring to minimize interference.

- Q: Is the code beginner-friendly?

A: The Arduino sketch and Python scripts are structured for learning, with clear sections for STT, TTS, intent handling, and serial commands.

- Q: Can I add a wake word?

A: Yes, integrate a lightweight wake-word engine to start listening mode before capturing speech, improving accuracy in noisy rooms.

- Q: Where can I get parts and upgrades?

A: Components, kits, and accessories are available from Bigyan Project (বিজ্ঞান প্রজেক্ট).

- Q: Is there a detailed build reference?

A: See the official project page for updates, media, and notes: Talking Human Robot with AI & Voice Control.
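
For the bilingual replies discussed in the first question, one simple way to decide which TTS voice to use is to check the script of the recognized text. The sketch below assumes the speech-to-text engine returns Bangla in its native script; the `bangla_ratio` and `pick_language` helpers and the 0.5 threshold are illustrative choices, not part of the project code.

```python
def is_bangla(ch: str) -> bool:
    """True if the character lies in the Bengali Unicode block (U+0980 to U+09FF)."""
    return "\u0980" <= ch <= "\u09ff"


def bangla_ratio(text: str) -> float:
    """Share of counted characters (letters plus Bengali-block signs) that are Bangla."""
    counted = [ch for ch in text if ch.isalpha() or is_bangla(ch)]
    if not counted:
        return 0.0
    return sum(1 for ch in counted if is_bangla(ch)) / len(counted)


def pick_language(text: str, threshold: float = 0.5) -> str:
    """Return 'bn' when the text is mostly Bangla script, otherwise 'en'."""
    return "bn" if bangla_ratio(text) >= threshold else "en"


print(pick_language("তুমি কেমন আছো"))
print(pick_language("How are you"))
```

Counting characters rather than looking for a single Bangla letter keeps mixed sentences, such as an English product name inside a Bangla question, from flipping the choice unexpectedly.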

Challenges and Considerations

- Environmental noise can reduce recognition accuracy; use a quality microphone and noise reduction

- Servo torque limits and heat build-up under continuous motion; allow cooling periods between gestures

- Power isolation is essential to prevent resets and audio artifacts

- Latency depends on model size and hardware; choose smaller models or faster hardware for quicker responses

- Servo horns and linkages need careful mechanical alignment to avoid binding

- Follow clear safety practices when demonstrating around students or crowds
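
To act on the latency point above, it helps to measure each stage before optimizing. The following is a small sketch using only the standard library; the stage names and the `time.sleep` calls are stand-ins for real speech-to-text, dialogue, and text-to-speech calls, and `timed` is a hypothetical helper.

```python
import time
from contextlib import contextmanager

timings = {}


@contextmanager
def timed(stage: str):
    """Record how long the wrapped block takes, in seconds, under `stage`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = time.perf_counter() - start


# Stand-in stages; replace the sleeps with real STT, dialogue, and TTS calls.
with timed("stt"):
    time.sleep(0.01)
with timed("dialogue"):
    time.sleep(0.01)
with timed("tts"):
    time.sleep(0.01)

slowest = max(timings, key=timings.get)
print(f"Slowest stage: {slowest} ({timings[slowest]:.3f} s)")
```

Knowing which stage dominates shows whether a smaller model, faster hardware, or a shorter reply would shorten the robot's response time the most.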

Compatibility

- Arduino Uno R3 SMD and compatible boards for motion control

- Python environment on Windows, macOS, or Linux for speech processing

- Standard 3.5 mm microphones and line-level speaker amplifiers

- Common LED drivers and resistors for eye indicators

- Serial communication over USB between host and Arduino

Future Enhancement Options

- Wake-word detection for hands-free activation

- Facial tracking or object detection to trigger gestures

- On-device, fully offline Bangla and English speech models

- Battery power module with safe charging and power monitoring

- Additional servos for more expressive arm, wrist, or neck movements

- Custom 3D-printed parts for smoother linkages and enclosures

Benefits

- Engages audiences with natural bilingual voice and expressive motion

- Teaches core concepts in speech, AI, electronics, and mechanics

- Modular design makes upgrades and repairs straightforward

- Supported by accessible components from Bigyan Project

- Ideal for science fairs, classrooms, and public demos

- Scales from basic predefined replies to AI-driven conversations

For parts, kits, and guidance, visit the Bigyan Project store. To follow progress, media, and updates, bookmark the official project page: Talking Human Robot with AI & Voice Control.

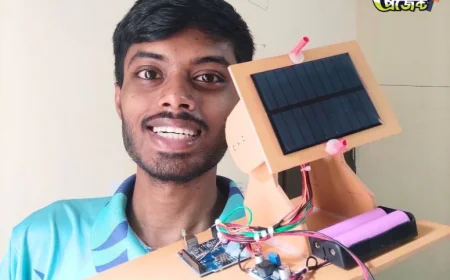

Intro / Lead Paragraph

A team of young innovators from Bigyan Project (বিজ্ঞান প্রজেক্ট) has developed a smart talking human-like robot in Bangladesh. The robot can listen and speak in both Bangla and English, using AI, Arduino, and Python, to make science learning and human–robot interaction more engaging for students and educators.

Background Context

Voice-controlled robots are increasingly relevant as societies adopt smart assistants and AI-powered devices. However, most systems only support English, leaving a major gap for Bangla-speaking communities. This project addresses that gap by enabling natural, bilingual interaction at an affordable cost, making advanced AI robotics accessible for classrooms, science fairs, and research in Bangladesh.

Project Details

The talking human robot is built with a 5 mm PVC structure, MG995 and MG90S servos for movement, and LED eyes for status indication. Its brain is an Arduino Uno R3 board connected to a Python-powered AI system. A microphone captures voice commands while mini speakers deliver replies. The system combines predefined answers with AI-generated responses, offering a balance of reliability and flexibility. According to the student developers, “We wanted to create a robot that not only moves like a human but also understands and speaks our own language.”

Research / Innovation Angle

What makes this project unique is its bilingual capability in Bangla and English. Unlike many voice-controlled robots that depend on costly or English-only software, this system integrates open-source tools with affordable hardware. It also demonstrates a hybrid approach where Arduino manages mechanical movements while Python handles speech recognition and natural language processing. The project contributes to the growing body of work on human–robot interaction in multilingual contexts.

Impact and Applications

The AI talking robot can serve multiple purposes in education, science outreach, and industry. Schools and universities can use it as a teaching aid for robotics, programming, and AI. Science fair participants can showcase it as a demonstration of applied innovation. Museums and events can deploy the robot as a bilingual guide or greeter. In the future, it may even be adapted for healthcare and customer service, offering accessible communication in local languages.

Quotes & Voices

Student Developer: “Our goal was to prove that robotics and AI are not limited to English. By giving the robot the ability to speak Bangla, we made technology more inclusive.”

Teacher Mentor: “Projects like this show how students can combine coding, mechanics, and artificial intelligence into something practical. It inspires the next generation of engineers.”

Industry Expert: “A bilingual talking robot is a step forward for human–robot communication. It demonstrates the potential of AI in emerging markets like Bangladesh, where local language support is crucial.”

Conclusion

The bilingual talking human robot from Bigyan Project is more than a science fair exhibit; it is a symbol of how young innovators can bridge global technology with local needs. With future upgrades such as wake-word detection, facial tracking, and fully offline speech models, the project can evolve into a powerful tool for research and community engagement. As one student summed it up: “We believe robots should speak the language of the people, not the other way around.” This project highlights the creativity and determination of the next generation of scientists in Bangladesh.
